The Simplest Guide to Neural Machine Translation 2026

Neural machine translation has transformed how businesses communicate across languages in 2026. With average accuracy reaching 94.2% across major language pairs, NMT technology has evolved from an experimental tool to an essential business solution. This comprehensive guide explains neural machine translation in simple terms, helping general audiences, business stakeholders, and SMEs understand how this technology can enhance their global communication strategies.

Unlike previous translation methods, neural machine translation processes entire sentences simultaneously, capturing context and producing more natural-sounding output. As companies increasingly adopt AI translation technology to expand into international markets, understanding NMT’s capabilities and limitations becomes essential for making informed decisions.

What is Neural Machine Translation?

Neural machine translation uses neural networks to translate text automatically from one language to another, drawing on patterns learnt from large datasets of existing translations. Interconnected layers of nodes encode the source text, decode it into the target language, and use attention mechanisms to keep each translated word tied to the relevant source context.

NMT represents a fundamental shift from earlier translation approaches. Rather than translating word-by-word or phrase-by-phrase, neural machine translation analyses entire sentences holistically. This sentence-level processing enables the system to understand context, handle idiomatic expressions more effectively, and produce output that reads naturally in the target language.

The technology has matured significantly since its mainstream introduction in 2016. When Google deployed neural machine translation, the system reduced errors by 60% compared to previous phrase-based approaches. Today, NMT holds 48.67% of the translation market share, demonstrating its widespread adoption across industries.

What is a Neural Network?

A neural network is an artificial intelligence system inspired by the human brain’s structure. It consists of interconnected nodes (or “neurons”) organised in layers that process information. These networks learn patterns from data through training, adjusting their internal parameters to improve performance over time.

In the context of translation, neural networks perform two primary functions. First, they encode the source language text into numerical representations that capture meaning. Second, they decode these representations into the target language, selecting appropriate words and grammatical structures. The network’s layers work together to transform input text into accurate translations, with each layer extracting increasingly sophisticated linguistic features.

The power of neural networks lies in their ability to learn complex relationships directly from data. Rather than requiring manually coded rules, these systems discover translation patterns by analysing millions of sentence pairs during training. This data-driven approach enables NMT to handle linguistic nuances that would be nearly impossible to capture through traditional programming methods.

What is Deep Learning Translation?

Deep learning translation employs deep neural networks—networks with multiple hidden layers—to perform machine translation tasks. The “deep” aspect refers to the numerous processing layers that transform source text into target translations. Each layer extracts progressively abstract representations of meaning, enabling the system to understand complex linguistic structures.

Deep learning translation differs from earlier approaches in fundamental ways. Rule-based systems relied on explicitly programmed rules, and statistical machine translation relied on phrase tables and separate statistical models built from bilingual corpora. Deep learning systems, by contrast, learn the whole translation task end to end from data. They develop internal representations of grammar, semantics, and context without explicit instruction about linguistic structure.

This approach delivers several advantages. Deep learning models capture long-range dependencies in sentences, maintaining coherence across lengthy passages. They handle multiple language pairs efficiently, often enabling zero-shot translation between languages not explicitly trained together. Most importantly, deep learning translation produces more fluent output that closely resembles human-written text.

The technology underlying modern AI translation works by combining natural language processing with deep neural architectures. Transformer models, the current state-of-the-art approach, use attention mechanisms to process entire input sequences simultaneously, dramatically improving both speed and translation quality compared to earlier recurrent architectures.

Types of Machine Translation

Understanding the evolution of machine translation helps contextualise NMT’s advantages. Four primary approaches have emerged over decades of development, each with distinct methodologies and applications.

Rule-Based Machine Translation (RBMT)

Rule-based machine translation, the earliest approach, translates content using grammatical rules and bilingual dictionaries. Language experts manually create extensive rule sets that map source language structures to target language equivalents. RBMT systems analyse input text grammatically, identify word functions, and apply predetermined transformation rules.
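To make the rule-and-dictionary idea concrete, here is a minimal sketch of a toy English-to-French translator. The dictionary entries, the word lists, and the single reordering rule are illustrative assumptions; a real RBMT system would use a full grammatical analysis and thousands of rules.

```python
# Toy bilingual dictionary (English -> French); entries are illustrative only.
DICTIONARY = {"the": "le", "cat": "chat", "black": "noir", "sleeps": "dort"}
ADJECTIVES = {"black"}
NOUNS = {"cat"}

def reorder_adjectives(words):
    """Rule: English 'adjective noun' becomes French 'noun adjective'."""
    result = list(words)
    i = 0
    while i < len(result) - 1:
        if result[i] in ADJECTIVES and result[i + 1] in NOUNS:
            result[i], result[i + 1] = result[i + 1], result[i]
            i += 2
        else:
            i += 1
    return result

def translate_rbmt(sentence):
    """Apply the reordering rule, then look each word up in the dictionary."""
    words = sentence.lower().split()
    reordered = reorder_adjectives(words)
    # Words missing from the dictionary are passed through unchanged.
    return " ".join(DICTIONARY.get(word, word) for word in reordered)

print(translate_rbmt("The black cat sleeps"))  # le chat noir dort
```

Every new construction needs another hand-written rule, which is why rule sets grow so large.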

Whilst predictable and consistent for simple translations, RBMT faces significant limitations. Creating comprehensive rule sets requires enormous manual effort. The systems struggle with idiomatic expressions, context-dependent meanings, and linguistic ambiguity. Modern applications primarily use RBMT for domain-specific scenarios where controlled vocabulary ensures predictable input.

Statistical Machine Translation (SMT)

Statistical machine translation improved upon rule-based systems by learning translation patterns from bilingual text corpora. SMT systems analyse parallel texts to calculate statistical probabilities for word and phrase translations. Given an input sentence, the system selects the most statistically likely translation based on patterns observed in training data.
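As a rough illustration of the statistical idea, the sketch below estimates translation probabilities by relative frequency and picks the most likely candidate. The aligned pairs are invented for the example, and real SMT systems combine such a table with language and reordering models.

```python
from collections import Counter, defaultdict

# Hypothetical phrase pairs extracted from a parallel corpus (English -> French).
ALIGNED_PAIRS = [
    ("bank", "banque"), ("bank", "banque"), ("bank", "banque"),
    ("bank", "rive"),
]

def build_phrase_table(pairs):
    """Estimate P(target | source) by relative frequency."""
    counts = defaultdict(Counter)
    for source, target in pairs:
        counts[source][target] += 1
    return {
        source: {target: n / sum(targets.values()) for target, n in targets.items()}
        for source, targets in counts.items()
    }

def most_likely(phrase, table):
    """Pick the highest-probability translation; pass unknown phrases through."""
    candidates = table.get(phrase)
    if not candidates:
        return phrase
    return max(candidates, key=candidates.get)

table = build_phrase_table(ALIGNED_PAIRS)
print(table["bank"])               # {'banque': 0.75, 'rive': 0.25}
print(most_likely("bank", table))  # banque
```

The table always returns "banque" for "bank", whatever the surrounding sentence says. That is the context problem that phrase-based systems struggled with.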

SMT offered greater flexibility than RBMT, requiring less manual rule creation. However, it still struggled with context, often producing grammatically awkward output. Phrase-based SMT, the most common variant, segments source text and compares segments against bilingual databases, calculating the most probable target language equivalents.

Neural Machine Translation (NMT)

Neural machine translation represents the current gold standard, processing entire sentences simultaneously rather than word-by-word. NMT uses encoder-decoder architectures with attention mechanisms, enabling the system to focus on relevant source words when generating each target word. This holistic approach dramatically improves fluency and accuracy.

The shift to neural approaches delivered transformative improvements. NMT systems capture subtle linguistic nuances, handle idiomatic expressions more effectively, and produce natural-sounding translations. They can be retrained or fine-tuned as new data becomes available, improving performance without hand-written rules. These advantages explain why leading platforms like Google Translate and DeepL are now built on neural architectures.

Hybrid Machine Translation (HMT)

Hybrid machine translation integrates multiple approaches—typically combining RBMT, SMT, and NMT—to leverage each method’s strengths. Serial hybrid systems process text through rule-based engines before applying statistical smoothing. Parallel hybrid systems run multiple engines simultaneously, combining outputs to generate final translations.

Hybrid approaches offer flexibility for specific use cases. Organisations might employ rule-based systems for controlled terminology, statistical methods for domain-specific content, and neural systems for general text. However, maintaining multiple engines increases complexity. Most modern implementations now favour pure neural approaches, occasionally supplemented with terminology management systems rather than fundamentally different translation engines.

How is Neural Machine Translation Different?

Neural machine translation distinguishes itself through several fundamental characteristics that set it apart from earlier approaches. These differences explain NMT’s superior performance and growing dominance in multilingual technology solutions.

Sentence-Level Processing

Traditional systems translate incrementally, processing words or short phrases in isolation. Neural machine translation analyses entire sentences simultaneously, capturing relationships between all words regardless of distance. This holistic view enables NMT to maintain context throughout translation, producing more coherent output.

Consider translating “The bank was closed yesterday.” A phrase-based statistical system picks whichever translation of “bank” (financial institution or riverbank) scores highest in its phrase table, whether or not it fits the sentence. A neural system examines the entire sentence and uses contextual clues such as “closed” to favour the financial sense.

Continuous Learning

RBMT requires manual rule updates, whilst SMT demands retraining with new parallel corpora. Neural machine translation can be improved incrementally through adaptive learning: systems can incorporate feedback from human editors and be fine-tuned on corrected output without complete retraining. This capability makes NMT particularly suitable for organisations requiring evolving terminology and style adaptation.

Fluency and Naturalness

Early machine translation produced stilted, grammatically awkward output. Neural systems generate translations that closely resemble human writing. By learning language patterns from vast datasets, NMT captures stylistic conventions, idiomatic expressions, and natural phrasing that earlier systems missed. This fluency proves essential for customer-facing content where professional presentation matters.

End-to-End Architecture

Statistical machine translation required separate components: alignment models, language models, reordering systems, and more. Neural translation integrates all functions within a single neural network trained jointly. This unified architecture simplifies deployment, reduces memory requirements, and enables more sophisticated optimisation during training.

Contextual Understanding

Neural networks develop internal representations of meaning that extend beyond surface-level word matching. They capture semantic relationships, grammatical structures, and discourse patterns implicitly through training. This deep contextual understanding enables NMT to handle ambiguous language, resolve pronoun references, and maintain consistency across long documents more effectively than previous approaches.

When to Use Neural Machine Translation

Neural machine translation excels in specific scenarios where its strengths align with business requirements. Understanding these optimal use cases helps organisations deploy NMT effectively whilst recognising situations requiring alternative approaches.

High-Volume, Fast Turnaround Content

When organisations face millions of words requiring translation within short timeframes, NMT becomes invaluable. E-commerce platforms translating product descriptions, customer support systems handling multilingual queries, and media companies localising content benefit enormously from NMT’s speed. The technology processes text far faster than human translators, enabling real-time or near-real-time translation.

Companies implementing machine translation plus post-editing services achieve productivity gains of 35-63% compared to translation from scratch, whilst maintaining quality through human oversight.

Repetitive, Technical Documentation

Neural machine translation performs exceptionally well with technical manuals, software documentation, and standardised content. These materials typically use controlled vocabulary and consistent terminology—ideal conditions for NMT. Custom-trained engines learn domain-specific language patterns, producing accurate first drafts that require minimal editing.

Industries such as manufacturing, technology, and pharmaceuticals leverage NMT for user manuals, API documentation, and regulatory submissions. The technology’s ability to maintain terminology consistency across extensive document sets proves particularly valuable.

Real-Time Communication

Chat systems, customer service platforms, and live collaboration tools increasingly rely on neural translation for instant multilingual communication. Modern NMT engines process sentences within milliseconds, enabling natural conversation flow across language barriers. Businesses operating globally use these capabilities to provide customer support without requiring multilingual staff.

The emergence of real-time AI transcription combined with NMT enables organisations to offer live translation for meetings, conferences, and webinars, fostering inclusive communication across diverse teams.

Privacy-Sensitive Applications

Organisations handling confidential data increasingly deploy on-premises NMT systems to maintain data sovereignty. Government agencies, healthcare providers, and financial institutions can translate sensitive documents without transmitting content to third-party services. Custom neural engines running on internal infrastructure provide translation capabilities whilst ensuring compliance with privacy regulations like GDPR and HIPAA.

Content Requiring Terminology Consistency

Legal documents, contracts, patents, and branded content demand precise, consistent terminology. Neural systems trained on organisation-specific glossaries help maintain terminology accuracy across translations. Translation memory integration ensures previously translated terms remain consistent, protecting brand identity and legal precision. For legally binding texts, the output should still be reviewed by a qualified human translator.

Situations Requiring Human Translation

Neural machine translation proves less suitable for creative content, marketing slogans, legal contracts requiring absolute precision, and culturally sensitive materials. Whilst NMT handles technical accuracy well, it struggles with creative adaptation, cultural nuance, and the subtle persuasive elements crucial for marketing. High-stakes applications benefit from hybrid solutions combining NMT speed with human expertise.

How Does Neural Machine Translation Work?

Understanding NMT’s internal mechanisms helps organisations appreciate its capabilities and limitations. Modern neural machine translation employs encoder-decoder architectures with attention mechanisms—sophisticated systems that transform source text into target translations through multiple processing stages.

The Encoder-Decoder Architecture

Neural machine translation systems comprise two primary components working in sequence. The encoder processes input text, converting words into numerical representations called embeddings. These embeddings capture semantic meaning, enabling the system to represent concepts mathematically. As the encoder processes each word, it generates contextualised representations that account for surrounding words.
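The sketch below shows how embeddings let a system compare meanings numerically. The three-dimensional vectors are made-up values for illustration; real embeddings have hundreds of dimensions and are learnt during training.

```python
import math

# Hypothetical 3-dimensional embeddings; real models learn these values.
EMBEDDINGS = {
    "cat": [0.90, 0.10, 0.00],
    "kitten": [0.85, 0.20, 0.05],
    "invoice": [0.00, 0.10, 0.95],
}

def cosine_similarity(a, b):
    """Cosine of the angle between two vectors: near 1 means similar direction."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

print(cosine_similarity(EMBEDDINGS["cat"], EMBEDDINGS["kitten"]))
print(cosine_similarity(EMBEDDINGS["cat"], EMBEDDINGS["invoice"]))
```

With these values, "cat" scores far closer to "kitten" than to "invoice", which is the sense in which embeddings capture semantic meaning.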

In the earliest encoder-decoder systems, the encoder output a single compressed representation of the entire source sentence, essentially a numerical summary of the input’s meaning. This context vector had to contain all the information the decoder needed to generate output. Modern systems instead keep a representation for every source token, as described under Attention Mechanisms.

The decoder takes the encoder’s output and generates the target translation one word at a time. At each step, the decoder predicts the most probable next word based on the source context and previously generated target words. This auto-regressive process continues until the system produces a complete translation.
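The auto-regressive loop can be sketched with a toy lookup table standing in for the decoder. The table `TOY_MODEL` and its probabilities are assumptions for illustration. A real decoder computes these probabilities with a neural network and conditions them on the source sentence, which this sketch omits. Always picking the single most probable token is known as greedy decoding; production systems often use beam search instead.

```python
END = "</s>"  # end-of-sentence marker

# Hypothetical next-token probabilities, keyed by the tokens generated so far.
TOY_MODEL = {
    (): {"la": 0.9, "le": 0.1},
    ("la",): {"fille": 0.8, "banque": 0.2},
    ("la", "fille"): {"monte": 0.7, END: 0.3},
    ("la", "fille", "monte"): {END: 0.6, "le": 0.4},
}

def greedy_decode(model, max_len=10):
    """Generate one token at a time, always taking the most probable next token."""
    output = []
    while len(output) < max_len:
        # Unknown prefixes end the sentence in this toy setup.
        probs = model.get(tuple(output), {END: 1.0})
        token = max(probs, key=probs.get)
        if token == END:
            break
        output.append(token)
    return output

print(greedy_decode(TOY_MODEL))  # ['la', 'fille', 'monte']
```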

Attention Mechanisms

Early encoder-decoder systems compressed entire sentences into fixed-length vectors, losing information in long passages. Attention mechanisms solved this limitation by allowing the decoder to focus on different source words when generating each target word.

Rather than relying solely on a single context vector, attention-enhanced systems compute weighted combinations of all encoder outputs. When translating “The girl rides the bike” into French, the system might focus heavily on “girl” when generating “fille” whilst attending to “rides” when producing “monte.” This dynamic focus dramatically improves translation quality, particularly for long sentences.
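A minimal sketch of this weighted combination, using dot-product scores and a softmax, is shown below. The two-dimensional encoder states and the query vector are invented values; Transformer models additionally scale the scores and use learnt projections, which are left out here.

```python
import math

def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    highest = max(scores)
    exps = [math.exp(s - highest) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def attend(query, encoder_states):
    """Score each source position against the query, then average the states."""
    scores = [sum(q * k for q, k in zip(query, state)) for state in encoder_states]
    weights = softmax(scores)
    dim = len(encoder_states[0])
    context = [
        sum(w * state[d] for w, state in zip(weights, encoder_states))
        for d in range(dim)
    ]
    return weights, context

SOURCE = ["The", "girl", "rides", "the", "bike"]
# Hypothetical encoder outputs, one vector per source word.
STATES = [[0.1, 0.0], [2.0, 0.1], [0.1, 2.0], [0.1, 0.0], [0.5, 0.5]]
QUERY_FOR_FILLE = [2.0, 0.0]  # assumed decoder state when generating "fille"

weights, context = attend(QUERY_FOR_FILLE, STATES)
for word, weight in zip(SOURCE, weights):
    print(f"{word}: {weight:.2f}")
```

With these values, most of the weight falls on "girl", mirroring the behaviour described above.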

Attention mechanisms provide an additional benefit: a degree of interpretability. By examining attention weights, researchers can see which source words the model attended to when making specific translation choices. This partial transparency helps identify systematic errors and guides model improvements.

Training Process

Neural machine translation systems learn through supervised training on parallel corpora—millions of sentence pairs in source and target languages. During training, the system attempts to translate source sentences, compares its outputs against reference translations, and adjusts internal parameters to reduce errors.
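One common way to measure the gap between output and reference is cross-entropy loss, sketched below. The use of cross-entropy and the probability values are assumptions for illustration; the point is that the loss falls as the model assigns more probability to the reference words.

```python
import math

def cross_entropy(predicted, reference):
    """Average negative log-probability assigned to the reference tokens."""
    losses = [
        -math.log(probs.get(token, 1e-12))  # tiny floor avoids log(0)
        for probs, token in zip(predicted, reference)
    ]
    return sum(losses) / len(losses)

reference = ["la", "fille"]
# Hypothetical model predictions at two points in training.
early_in_training = [{"la": 0.3, "le": 0.7}, {"fille": 0.2, "banque": 0.8}]
later_in_training = [{"la": 0.9, "le": 0.1}, {"fille": 0.8, "banque": 0.2}]

print(cross_entropy(early_in_training, reference))
print(cross_entropy(later_in_training, reference))
```

Training adjusts the parameters in whichever direction lowers this number across the whole corpus.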

Modern training employs sophisticated optimisation algorithms that gradually refine millions or even billions of network parameters. The process requires substantial computational resources, notably high-performance GPUs and extensive training time, but produces systems capable of translating across diverse domains and language pairs.

Advanced techniques like back-translation, where systems translate target-language text back to source languages to create synthetic training data, help improve performance for low-resource language pairs. Transfer learning enables systems trained on high-resource languages to perform reasonably well on related languages with limited parallel data.
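Back-translation can be sketched in a few lines. The function `toy_reverse_translate` is a hypothetical stand-in for a trained target-to-source engine. The key point is that the target side of each synthetic pair is genuine human-written text, while the source side is machine-generated.

```python
def back_translate(target_sentences, reverse_translate):
    """Pair each genuine target sentence with a machine-made source sentence."""
    return [(reverse_translate(sentence), sentence) for sentence in target_sentences]

# Stand-in for a trained French -> English model; a real system would call an engine here.
TOY_REVERSE_MODEL = {
    "la fille monte": "the girl rides",
    "le chat dort": "the cat sleeps",
}

def toy_reverse_translate(sentence):
    return TOY_REVERSE_MODEL.get(sentence, sentence)

monolingual_french = ["la fille monte", "le chat dort"]
synthetic_pairs = back_translate(monolingual_french, toy_reverse_translate)
print(synthetic_pairs)
```

The resulting (source, target) pairs are added to the genuine parallel data when training the English-to-French direction.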

The Translation Pipeline

When translating new text, NMT systems follow a multi-step pipeline:

- Tokenisation: Input text is split into tokens—typically words or subword units. Subword tokenisation enables systems to handle rare words by breaking them into familiar components.

- Encoding: The encoder builds a contextualised representation for each token. Transformer models process all tokens in parallel, whereas older recurrent models process them one at a time.

- Attention computation: The attention mechanism calculates relevance scores between source and target positions.

- Decoding: The decoder generates target words one by one, using attention-weighted source representations and previously generated target words.

- Detokenisation: Output tokens are converted back into readable text, with appropriate spacing and formatting.
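The first and last steps of the pipeline can be sketched as follows. This is a toy greedy longest-match tokeniser with a hand-picked vocabulary and a "##" continuation marker, both assumptions for illustration. Production systems learn their subword vocabulary from data.

```python
# Hypothetical subword vocabulary; real vocabularies are learnt from large corpora.
VOCAB = {"un", "translat", "able", "ion", "the"}

def tokenise_word(word, vocab):
    """Split a word into the longest known pieces, left to right."""
    pieces = []
    start = 0
    while start < len(word):
        end = len(word)
        while end > start and word[start:end] not in vocab:
            end -= 1
        if end == start:
            end = start + 1  # no known piece: fall back to a single character
        piece = word[start:end]
        # "##" marks a piece that continues the previous one.
        pieces.append(piece if start == 0 else "##" + piece)
        start = end
    return pieces

def tokenise(text, vocab):
    tokens = []
    for word in text.lower().split():
        tokens.extend(tokenise_word(word, vocab))
    return tokens

def detokenise(tokens):
    """Glue continuation pieces back onto the preceding token."""
    words = []
    for token in tokens:
        if token.startswith("##") and words:
            words[-1] += token[2:]
        else:
            words.append(token)
    return " ".join(words)

tokens = tokenise("untranslatable", VOCAB)
print(tokens)              # ['un', '##translat', '##able']
print(detokenise(tokens))  # untranslatable
```

A rare word such as "untranslatable" is handled by three familiar pieces, so the model never meets an entirely unknown token.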

This efficient pipeline enables website localisation systems to process content rapidly whilst maintaining translation quality across extensive documentation.

Advantages of Neural Machine Translation

Neural machine translation delivers substantial benefits that explain its rapid adoption across industries. These advantages make NMT an essential tool for organisations operating in multilingual markets.

Superior Fluency and Naturalness

Neural systems produce translations that sound significantly more natural than earlier approaches. By learning language patterns from massive datasets, NMT captures stylistic conventions and idiomatic expressions, generating output that closely resembles human writing. This fluency proves crucial for customer-facing content where professional presentation impacts brand perception.

Businesses leveraging content and document translation services benefit from NMT’s ability to maintain the source text’s tone and style whilst adapting naturally to target language conventions.

Contextual Accuracy

NMT’s sentence-level processing enables superior contextual understanding. The systems resolve ambiguous words, handle pronoun references correctly, and maintain consistency across sentences. This contextual awareness reduces errors that plagued earlier word-by-word translation approaches.

The technology’s attention mechanisms allow models to focus on relevant context when translating each word, dramatically improving accuracy for complex sentences with multiple clauses or long-range dependencies.

Continuous Improvement

Neural systems learn and improve over time through adaptive mechanisms. Organisations can fine-tune models with domain-specific data, teaching systems industry terminology and stylistic preferences. Post-editing feedback helps systems recognise and correct recurring errors without complete retraining.

This capability makes NMT particularly valuable for businesses with evolving product lines or terminology. Systems adapt to new concepts, maintaining translation quality as organisations grow and change.

Speed and Scalability

Neural machine translation processes text dramatically faster than human translators. On suitable hardware, modern systems can translate thousands of words per second, enabling real-time applications and rapid turnaround for high-volume projects. This speed advantage becomes essential for organisations facing tight deadlines or requiring instant multilingual access to content.

The technology scales efficiently—once deployed, NMT systems handle increasing volumes without proportional cost increases, making them economically attractive for large-scale operations.

Reduced Memory Requirements

Unlike statistical systems requiring extensive phrase tables and language models, neural networks store translation knowledge in compact parameter sets. This efficiency reduces storage requirements and simplifies deployment, particularly for mobile or embedded applications where memory constraints matter.

Cost Effectiveness

By automating initial translation, NMT significantly reduces project costs. Organisations implementing hybrid translation solutions that combine machine translation with human post-editing achieve cost savings of 20-60% compared to traditional translation, whilst maintaining quality through expert review.

The economic advantages prove particularly compelling for businesses translating repetitive content, technical documentation, or high-volume customer communications.

Multi-Language Capability

Modern neural systems handle dozens or hundreds of language pairs with single models. Meta’s NLLB-200 model translates across 200 languages, enabling direct translation without intermediary languages. This multilingual capability simplifies infrastructure and enables rapid expansion into new markets without deploying separate systems for each language pair.

Integration with Business Workflows

Neural translation integrates seamlessly with content management systems, translation management platforms, and business applications. APIs enable automated translation within existing workflows, reducing manual intervention. This integration capability makes NMT practical for organisations requiring translation at scale within established operational processes.

Limitations and Challenges of NMT

Despite impressive capabilities, neural machine translation faces significant limitations that organisations must understand when deploying the technology. Recognising these constraints helps businesses set realistic expectations and implement appropriate quality controls.

Data Requirements

Neural systems require massive training datasets—typically millions of parallel sentences—to achieve acceptable performance. Low-resource languages with limited digital content struggle to support effective NMT development. Even major languages need domain-specific training data for specialised applications, creating barriers for organisations without access to extensive parallel corpora.

The quality of training data matters enormously. “Garbage in, garbage out” applies directly to NMT. Systems trained on low-quality materials reproduce errors and non-idiomatic patterns, potentially degrading output quality.

Computational Costs

Training neural machine translation systems demands substantial computational resources. High-performance GPUs, extensive training time, and significant electricity consumption make NMT development expensive. Whilst inference (actual translation) runs efficiently, maintaining and updating custom models requires ongoing investment in computing infrastructure.

Organisations implementing on-premises systems for data privacy must invest in appropriate hardware—typically GPUs with 8GB+ memory and supporting infrastructure. Cloud-based alternatives reduce capital requirements but introduce recurring operational costs.

Handling of Ambiguous Language

Neural systems struggle with highly ambiguous text requiring deep contextual or cultural knowledge. Idioms, wordplay, cultural references, and context-dependent meanings can confuse NMT, producing awkward or incorrect translations. The technology performs best with clear, unambiguous source text.

Creative content, marketing slogans, and literary works often suffer in machine translation because subtlety and emotional resonance prove difficult for neural networks to capture. These materials typically require human expertise to preserve intended impact.

Consistency Challenges

Whilst individual sentence translations may be accurate, NMT can produce inconsistencies across documents. Terminology might be translated differently in separate passages, formality levels may vary inappropriately, and document-level coherence sometimes suffers. Sentence-by-sentence processing limits awareness of broader discourse structure.

This limitation makes unreviewed NMT output less suitable for legal documents, technical specifications, and other materials where absolute consistency matters. App and software localisation projects particularly benefit from human oversight to maintain interface terminology consistency.

Long Sentence Difficulties

Despite improvements, neural systems can struggle with very long sentences containing multiple clauses and complex grammatical structures. Translation quality often degrades as sentence length increases, with systems occasionally producing incomplete or incoherent output for particularly lengthy input.

Organisations can mitigate this limitation by preprocessing content to break excessively long sentences into shorter units before translation.
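One possible preprocessing step is sketched below. It assumes that semicolons and colons are safe break points and that a word-count threshold is a reasonable proxy for "too long"; both choices would need tuning for real content.

```python
import re

def split_long_sentence(sentence, max_words=12):
    """Break an over-long sentence at semicolons and colons into shorter segments."""
    if len(sentence.split()) <= max_words:
        return [sentence]
    clauses = re.split(r"(?<=[;:])\s+", sentence)
    segments, current = [], ""
    for clause in clauses:
        candidate = f"{current} {clause}".strip()
        if current and len(candidate.split()) > max_words:
            segments.append(current)  # adding this clause would exceed the limit
            current = clause
        else:
            current = candidate
    if current:
        segments.append(current)
    return segments

text = (
    "Install the driver before connecting the device; "
    "restart the computer when prompted; "
    "then open the settings panel and select the new printer."
)
for segment in split_long_sentence(text):
    print(segment)
```

Segments are translated separately and rejoined afterwards, so break points should fall where the target language can also start a new clause.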

Lack of Cultural Nuance

Neural machine translation typically misses cultural subtleties that human translators instinctively adapt. Regional preferences, culturally specific concepts, and sociolinguistic appropriateness often fall outside NMT capabilities. The systems lack cultural awareness and real-world knowledge that inform human translation decisions.

Marketing materials requiring cultural adaptation—often called transcreation—generally need human translators who understand both source and target cultures deeply.

Rare Word Handling

Whilst subword tokenisation helps, NMT still struggles with very rare words, proper nouns, and recently coined terms not present in training data. The systems may mistranslate names, technical terminology, or neologisms, requiring human review to catch and correct such errors.

Custom terminology databases and frequent model updates help mitigate this issue but cannot eliminate it entirely.

Opacity and Explainability

Neural networks function as “black boxes”—their internal decision-making processes remain opaque. When NMT produces incorrect translations, understanding why proves difficult. This lack of explainability complicates debugging and makes it challenging to predict when systems will fail.

Human post-editors must carefully review output rather than assuming correctness, particularly for high-stakes applications where errors carry serious consequences.

Best Practices for Incorporating NMT into Your Workflows

Successful neural machine translation implementation requires careful planning and strategic integration. These best practices help organisations maximise NMT benefits whilst mitigating limitations.

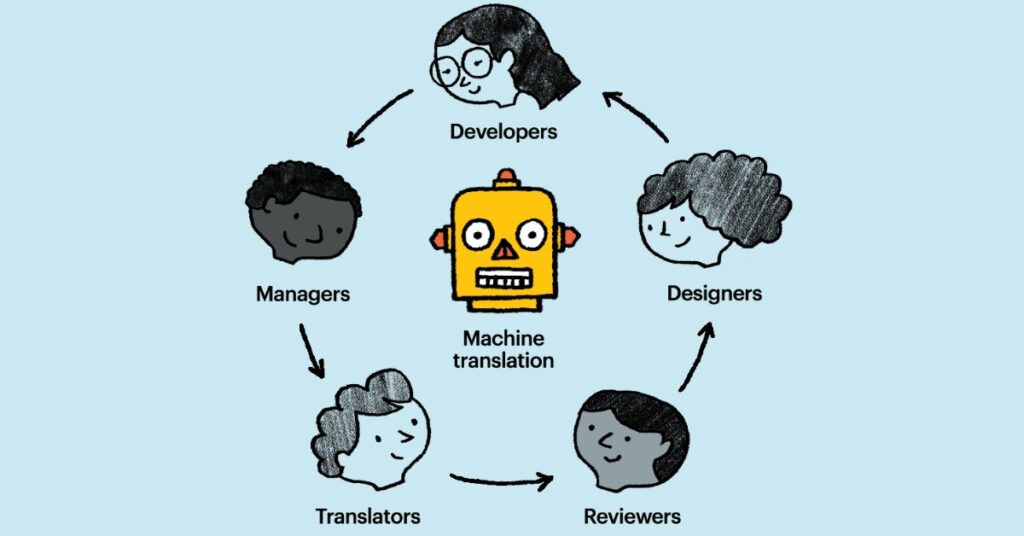

Start with Hybrid Approaches

Combining neural translation with human post-editing delivers optimal results. Use NMT to generate initial drafts, then engage qualified linguists to refine output. This hybrid approach captures NMT’s speed and cost advantages whilst ensuring human experts address nuances, cultural adaptation, and quality verification.

Post-editing typically reduces translation time by 35-63% compared to translating from scratch, delivering substantial productivity gains whilst maintaining quality. Organisations should provide post-editors with appropriate training, as editing machine output requires different skills than traditional translation.

Implement Quality Evaluation

Establish robust quality assessment processes rather than assuming NMT output meets standards. Use both automated metrics (BLEU, COMET) and human evaluation to monitor translation quality. COMET scores correlate better with human judgements than traditional BLEU metrics, providing more reliable quality assessment.
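To show what an automated metric measures, the sketch below computes the clipped n-gram precision at the heart of BLEU. It is a simplification: full BLEU combines precisions for several n-gram sizes and applies a brevity penalty, and COMET relies on a trained neural model that is not reproduced here.

```python
from collections import Counter

def ngrams(tokens, n):
    return [tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1)]

def modified_precision(candidate, reference, n):
    """Share of candidate n-grams found in the reference, with clipped counts."""
    candidate_counts = Counter(ngrams(candidate, n))
    reference_counts = Counter(ngrams(reference, n))
    if not candidate_counts:
        return 0.0
    overlap = sum(
        min(count, reference_counts[gram])
        for gram, count in candidate_counts.items()
    )
    return overlap / sum(candidate_counts.values())

candidate = "the cat sat on the mat".split()
reference = "the cat is on the mat".split()
print(modified_precision(candidate, reference, 1))  # 5 of 6 unigrams match
print(modified_precision(candidate, reference, 2))  # 3 of 5 bigrams match
```

Because the score only counts surface overlap, a correct paraphrase can score poorly, which is one reason to pair such metrics with human evaluation.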

Regular quality audits help identify systematic errors, enabling targeted improvements. Collecting feedback from post-editors creates valuable data for model refinement.

Use Translation Memories

Integrate NMT with translation memory systems to maintain consistency. Translation memories store previously translated segments, ensuring identical source text receives identical translations across projects. This integration proves essential for technical documentation, user interfaces, and branded content where terminology consistency matters.
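The lookup-then-fallback logic can be sketched as follows. The function `placeholder_engine` is a hypothetical stand-in for a real NMT call, and only exact matches are handled; commercial tools also support fuzzy matching.

```python
def normalise(segment):
    """Collapse whitespace and case so trivially different segments still match."""
    return " ".join(segment.split()).lower()

def translate_with_memory(segment, memory, machine_translate):
    """Reuse a stored translation when one exists; otherwise call the engine."""
    key = normalise(segment)
    if key in memory:
        return memory[key], "memory"
    return machine_translate(segment), "machine"

memory = {
    normalise("Press the power button."): "Appuyez sur le bouton d'alimentation.",
}

def placeholder_engine(segment):
    # Stand-in for a real NMT engine call.
    return f"[MT] {segment}"

print(translate_with_memory("Press  the power button.", memory, placeholder_engine))
print(translate_with_memory("Open the lid.", memory, placeholder_engine))
```

Returning the origin of each translation lets reviewers focus their attention on machine-generated segments.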

Modern translation portals combine translation memory, terminology management, and neural machine translation in unified platforms, streamlining multilingual content production.

Maintain Terminology Databases

Create and maintain glossaries defining critical terminology translations. Neural systems respect terminology constraints when properly configured, ensuring product names, technical terms, and branded vocabulary translate consistently. Regular glossary updates keep systems aligned with evolving organisational language.
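A simple post-translation check against the glossary might look like the sketch below. The glossary entries are invented examples, and plain substring matching is an assumption that ignores inflected forms, so a production check would need language-aware matching.

```python
def find_terminology_violations(source, translation, glossary):
    """List glossary terms present in the source whose required translation is missing."""
    source_lower = source.lower()
    translation_lower = translation.lower()
    return [
        (source_term, target_term)
        for source_term, target_term in glossary.items()
        if source_term.lower() in source_lower
        and target_term.lower() not in translation_lower
    ]

# Hypothetical English -> French glossary.
GLOSSARY = {
    "power button": "bouton d'alimentation",
    "dashboard": "tableau de bord",
}

violations = find_terminology_violations(
    "Press the power button on the dashboard.",
    "Appuyez sur le bouton d'alimentation du panneau.",
    GLOSSARY,
)
print(violations)  # [('dashboard', 'tableau de bord')]
```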

Terminology management proves particularly crucial for regulated industries where specific terms carry legal or technical significance.

Optimise Source Content

NMT performs best with clear, well-written source text. Implement controlled language guidelines that promote clarity, reduce ambiguity, and use consistent terminology. Simplify sentence structures, avoid idioms in technical documentation, and maintain consistent style.

Pre-editing source content improves machine translation quality and reduces post-editing effort, creating efficiency gains throughout the workflow.

Select Appropriate Content Types

Assess which content types suit neural translation and which require human expertise. Route repetitive technical documentation, customer support materials, and internal communications through NMT. Reserve human translation for marketing campaigns, legal contracts, creative content, and culturally sensitive materials.
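The routing decision can be expressed as a small lookup. The category names and workflow labels below are hypothetical; each organisation would define its own classification.

```python
# Hypothetical content categories, following the guidance above.
NMT_SUITABLE = {"technical_documentation", "customer_support", "internal_communication"}
HUMAN_REQUIRED = {"marketing_campaign", "legal_contract", "creative_content", "culturally_sensitive"}

def route_content(content_type):
    """Choose a translation workflow for a given content type."""
    if content_type in HUMAN_REQUIRED:
        return "human_translation"
    if content_type in NMT_SUITABLE:
        return "nmt_with_post_editing"
    # Unclassified content is flagged for a manual decision instead of guessed.
    return "manual_triage"

for content_type in ["technical_documentation", "legal_contract", "press_release"]:
    print(content_type, "->", route_content(content_type))
```

Sending unknown types to manual triage keeps new kinds of content from slipping into an unsuitable workflow.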

Content classification helps organisations optimise resource allocation, applying NMT where it delivers maximum value whilst engaging human expertise where necessary.

Provide Context to Post-Editors

Equip human reviewers with context about content purpose, target audience, and quality requirements. Post-editors perform better when they understand how translations will be used and what standards apply. Provide reference materials, style guides, and access to subject matter experts.

Effective multilingual retail and e-commerce solutions depend on post-editors understanding both product context and customer expectations in target markets.

Implement Continuous Improvement

Treat NMT deployment as an iterative process rather than a one-time implementation. Collect post-edit data, analyse error patterns, and periodically retrain or fine-tune models with improved data. Adaptive systems that learn from corrections improve over time, gradually reducing post-editing requirements.

Monitor performance metrics, gather user feedback, and make data-driven decisions about model updates and workflow refinements.

Ensure Data Privacy and Security

Implement appropriate security measures when handling sensitive content. For confidential materials, consider on-premises NMT deployment or private cloud solutions rather than public translation APIs. Ensure compliance with data protection regulations relevant to your industry and jurisdictions.

Evaluate vendor security practices carefully, including data encryption, access controls, and retention policies.

Train Teams Appropriately

Provide training for both content creators and post-editors. Content creators should understand how to write MT-friendly source text. Post-editors need specialised skills for efficiently reviewing and correcting machine output. Project managers benefit from understanding NMT capabilities and limitations to make informed resource allocation decisions.

Investing in team capability development maximises return on NMT technology investments.

Popular Use Cases for Neural MT Today

Neural machine translation delivers value across diverse industries and applications. These real-world use cases illustrate NMT’s practical impact on business operations.

E-Commerce Product Descriptions

Online retailers translate millions of product listings using neural machine translation. The technology’s speed enables rapid market expansion, allowing platforms to offer localised shopping experiences across dozens of languages. NMT handles repetitive product specifications efficiently, whilst human review focuses on marketing copy requiring persuasive language.

Major e-commerce platforms report significant increases in international sales after implementing e-commerce localisation powered by neural translation, demonstrating measurable business impact.

Customer Support Systems

Multilingual customer service operations leverage NMT for real-time chat translation and support ticket processing. The technology enables small support teams to serve global customer bases without requiring fluency in dozens of languages. Knowledge bases, FAQs, and help documentation translate automatically, ensuring consistent information across languages.

Real-time translation enables support agents to communicate with customers in their preferred languages, improving satisfaction and resolution rates.

Technical Documentation

Software companies, manufacturers, and technology firms use neural translation for user manuals, API documentation, and technical specifications. These materials’ repetitive nature and controlled vocabulary suit NMT exceptionally well. Custom-trained engines learn domain terminology, producing accurate first drafts requiring minimal editing.

The technology dramatically reduces time-to-market for multilingual product releases, enabling simultaneous launches across markets.

Internal Communications

Multinational organisations deploy NMT for internal memos, policy documents, and corporate communications. The technology facilitates information sharing across globally distributed teams, ensuring employees access critical information in their preferred languages. Automated translation reduces delays and costs compared to traditional translation workflows for internal content.

News and Media

Media organisations use neural translation to repurpose content across language markets rapidly. Breaking news translates automatically, extending reach to international audiences within minutes. Whilst human editors typically review output before publication, NMT enables much faster initial translation than manual approaches.

Educational Content

Educational institutions and e-learning platforms leverage NMT to make course materials accessible to diverse linguistic communities. Lecture transcripts, reading materials, and assessments translate efficiently, reducing barriers to educational access. The technology supports multilingual pedagogy at scale.

Legal and Regulatory Documents

Law firms and compliance departments increasingly use neural translation with human review for contracts, regulatory filings, and legal correspondence. Whilst legal translations require absolute accuracy—necessitating expert review—NMT accelerates initial drafting significantly. Terminology databases ensure consistent translation of legal concepts across documents.

Organisations should engage qualified legal translation specialists to review machine-translated legal materials before use in official contexts.

Marketing Localisation

Marketing teams use NMT for initial drafts of multilingual campaigns, which creative teams then adapt for cultural relevance. Whilst marketing materials typically require significant human intervention, neural translation accelerates the process by providing starting points rather than blank pages. This approach works particularly well for marketing localisation projects with tight deadlines.

Healthcare Communications

Medical institutions deploy NMT for patient information leaflets, appointment reminders, and general health information. The technology helps healthcare providers serve diverse linguistic communities more effectively. Clinical documents requiring absolute precision receive human translation, whilst general informational content leverages machine translation with light review.

Software and App Localisation

Technology companies use neural translation to localise user interfaces, error messages, and in-app content. NMT’s speed enables rapid iteration during development cycles. Terminology management ensures interface consistency, whilst contextual translation adapts messages appropriately for each locale.

NMT vs. Other Types of Machine Translation

Understanding how neural machine translation compares to alternative approaches helps organisations select appropriate technologies for specific needs.

NMT vs. Statistical Machine Translation

Statistical machine translation, dominant before 2016, uses probabilistic models trained on parallel corpora. SMT analyses phrase-level translation patterns, selecting the most statistically probable target-language phrases for source segments. Neural approaches process entire sentences simultaneously, capturing longer-range dependencies.

- Quality Comparison: NMT produces significantly more fluent output than SMT. Google’s 2016 deployment reduced translation errors by 60% compared to its previous statistical system. Neural systems handle idiomatic expressions, context-dependent meanings, and grammatical agreement more effectively.

- Resource Requirements: SMT requires extensive phrase tables and language models, consuming substantial memory. Neural networks store translation knowledge more compactly, reducing deployment footprint. However, NMT demands more computational power during training.

- Customisation: Both approaches support domain adaptation, but neural systems adapt more efficiently through fine-tuning. SMT customisation requires rebuilding phrase tables from domain-specific corpora, whilst NMT fine-tunes existing models with smaller datasets.

- Current Status: Statistical approaches have largely become obsolete for general translation. Most major providers transitioned to neural systems by 2017, with SMT now relegated to niche applications or historical systems.

NMT vs. Rule-Based Machine Translation

Rule-based systems apply manually coded grammatical rules and bilingual dictionaries to translate text. RBMT analyses source sentences grammatically, applies transformation rules, and generates target-language output following target grammar rules.

- Quality Comparison: Neural translation dramatically outperforms rule-based approaches for general content. RBMT produces literal, often unnatural translations that lack fluency. Neural systems capture idiomatic language and natural phrasing that RBMT cannot replicate.

- Predictability: RBMT offers consistent, predictable output: the same input always produces identical translations. Neural systems may generate slightly different translations for repeated inputs, for example after a model update or when decoding involves sampling, though typically within acceptable quality ranges. Some organisations value RBMT’s deterministic behaviour for specific controlled-vocabulary applications.

- Development Effort: Rule-based systems require enormous manual effort from linguistic experts to create comprehensive rule sets. Neural approaches learn automatically from data, dramatically reducing development time and cost. Adding new language pairs to RBMT demands extensive linguistic analysis; NMT needs primarily parallel training data.

- Domain Specificity: RBMT works well for narrow domains with controlled terminology. Neural translation excels across broader domains, handling varied linguistic contexts more flexibly.

- Modern Applications: RBMT has largely been superseded except for specific applications requiring guaranteed terminology translation or operating with minimal training data.

NMT vs. Large Language Model Translation

Recent large language models (LLMs) like GPT-4 and Claude can translate between languages as one of many capabilities. This raises questions about NMT’s future relevance versus general-purpose AI systems.

- Specialisation: NMT systems are purpose-built for translation, training primarily on parallel corpora with translation-specific optimisation. LLMs learn translation as one task among many, potentially sacrificing specialisation for versatility.

- Consistency: Custom NMT engines maintain strict terminology consistency through integrated glossaries and translation memories. LLMs offer less deterministic control, sometimes varying translations of identical terms. For enterprise applications requiring consistent terminology, specialised NMT often outperforms general LLMs.

- Domain Adaptation: NMT fine-tunes efficiently on domain-specific parallel data. LLM adaptation through prompt engineering provides less control and may require more examples to achieve comparable domain performance.

- Cost and Speed: Specialised NMT engines typically process text faster and more cost-effectively than general LLMs. For high-volume translation, NMT’s computational efficiency provides economic advantages.

- Creative Adaptation: LLMs excel at creative translation requiring cultural adaptation beyond literal meaning. Neural MT performs better for technical accuracy but may miss creative nuances that LLMs can capture through broader training.

- Future Trajectory: The translation industry increasingly views these as complementary rather than competitive technologies. Hybrid approaches combining NMT’s consistency with LLMs’ contextual understanding may represent the future direction.

How Accurate is Neural Machine Translation?

Neural machine translation accuracy varies significantly based on language pairs, content types, and model quality. Understanding current performance levels helps organisations set realistic expectations.

Overall Accuracy Metrics

In 2026, NMT systems achieve average accuracy of 94.2% across major language pairs. This represents substantial improvement over earlier approaches and continues increasing as models improve and training datasets expand. However, “accuracy” encompasses multiple dimensions—semantic correctness, grammatical fluency, and stylistic appropriateness—each measured differently.

Google Translate demonstrates variable performance by language: 94% accuracy for Spanish medical instructions, 90% for Tagalog, and 82.5% for Korean. These differences reflect training data availability, linguistic similarity to English, and grammatical complexity. High-resource language pairs with extensive parallel corpora achieve near-human performance, whilst low-resource pairs lag considerably.

Language Pair Variation

European language pairs with extensive shared training data—English-French, English-German, English-Spanish—demonstrate the highest accuracy. DeepL particularly excels at European languages, often outperforming competitors in blind tests. Asian languages show more variation: Chinese-English translation performs well due to substantial training data, whilst languages like Vietnamese or Thai with less digital content demonstrate lower accuracy.

Related languages sharing grammatical structures translate more accurately than linguistically distant pairs. Spanish-Portuguese translation achieves higher accuracy than English-Japanese due to linguistic similarity.

Content Type Impact

NMT accuracy varies dramatically by content type. Technical documentation with controlled vocabulary achieves 90%+ accuracy and requires minimal post-editing. Marketing content demanding creative language and cultural adaptation scores lower, often requiring substantial human revision. Legal documents present terminology challenges that custom-trained engines handle better than general systems.

The technology performs best with clear, unambiguous source text using standard grammar and vocabulary. Colloquialisms, slang, and creative language reduce accuracy significantly.

Evaluation Metrics

Translation quality assessment employs multiple metrics:

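- BLEU (Bilingual Evaluation Understudy): Measures n-gram overlap between machine and reference translations, commonly reported on a 0-100 scale. As a rough rule of thumb, scores above 50 indicate high quality, whilst scores below 30 suggest significant issues, although scores are only comparable within the same test set and language pair. Because BLEU rewards surface-level matching, it can penalise valid paraphrases and miss semantic errors.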

- COMET (Crosslingual Optimised Metric for Evaluation of Translation): Uses neural networks trained on human quality judgements to assess translations. COMET correlates better with human evaluation than BLEU, particularly for semantic accuracy and fluency.

- Human Evaluation: Ultimately, human assessment remains the gold standard. Professional translators rate adequacy (meaning preservation) and fluency (naturalness) on defined scales. Whilst expensive and time-consuming, human evaluation provides the most reliable quality measurement.
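To make the n-gram overlap idea behind BLEU concrete, here is a minimal sketch of a sentence-level score with a single reference. It assumes whitespace tokenisation and applies no smoothing, so it is a teaching aid rather than a replacement for an established scoring tool. The function names are illustrative.

```python
import math
from collections import Counter


def ngrams(tokens, n):
    """Count all n-grams of length n in a token list."""
    return Counter(tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1))


def simple_bleu(candidate: str, reference: str, max_n: int = 4) -> float:
    """Unsmoothed single-reference BLEU on a 0-100 scale."""
    cand, ref = candidate.split(), reference.split()
    if not cand:
        return 0.0
    log_precisions = []
    for n in range(1, max_n + 1):
        cand_counts, ref_counts = ngrams(cand, n), ngrams(ref, n)
        # Clipped overlap: a candidate n-gram is credited at most as often
        # as it appears in the reference.
        overlap = sum(min(count, ref_counts[gram])
                      for gram, count in cand_counts.items())
        total = sum(cand_counts.values())
        if overlap == 0 or total == 0:
            # Without smoothing, one empty n-gram order zeroes the score
            # (this includes candidates shorter than max_n tokens).
            return 0.0
        log_precisions.append(math.log(overlap / total))
    # Brevity penalty discourages candidates shorter than the reference.
    bp = 1.0 if len(cand) > len(ref) else math.exp(1 - len(ref) / len(cand))
    return 100 * bp * math.exp(sum(log_precisions) / max_n)
```

The sketch also shows why BLEU is a surface-level measure: a correct translation that uses different wording from the reference earns little credit because few n-grams match.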

Domain-Specific Performance

Custom NMT engines trained on domain-specific data dramatically outperform general systems for specialised content. Medical translation systems trained on clinical texts achieve professional-grade accuracy for healthcare documentation. Legal engines adapted to contract language handle legal terminology effectively. Financial translation systems specialised in accounting and finance produce accurate results for quarterly reports and regulatory filings.

Organisations requiring high-quality domain-specific translation should invest in custom engine development rather than relying solely on general-purpose systems.

Improvement Trajectory

Neural translation quality improves continuously as training techniques advance and datasets expand. The technology’s accuracy has increased substantially since initial deployment in 2016. Transformer architectures, introduced in 2017, delivered significant quality improvements over earlier recurrent approaches. Current research explores multimodal translation, improved low-resource language performance, and better handling of discourse-level coherence.

Practical Implications

For business applications, NMT accuracy often proves sufficient for specific use cases with appropriate human oversight. Customer support, internal documentation, and product specifications translate with adequate quality for practical use, particularly when combined with light post-editing. Marketing campaigns, legal contracts, and high-visibility content typically require more substantial human involvement to achieve acceptable quality.

Organisations should conduct pilot projects evaluating NMT accuracy for their specific content types and language pairs before large-scale deployment. Testing with representative samples reveals whether machine translation meets quality requirements for intended applications.

What is the Future of Neural Machine Translation?

Neural machine translation continues evolving rapidly, with several transformative trends reshaping the technology landscape in 2026 and beyond.

From NMT to Large Language Models

The translation industry is witnessing a significant shift from traditional neural machine translation to large language model-based approaches. Whilst NMT remains dominant, LLMs increasingly handle translation as one capability among many. These models leverage broader training, including monolingual data, multimodal inputs, and diverse tasks, to develop richer linguistic understanding.

LLM-based translation often produces more culturally nuanced output than traditional NMT. However, specialised neural systems maintain advantages for consistency, speed, and cost-effectiveness in high-volume scenarios. The future likely involves hybrid architectures combining LLM contextual understanding with NMT’s translation-specific optimisation.

Real-Time Multimodal Translation

In 2026, real-time speech-to-speech translation has advanced dramatically, reaching sub-3-second latency. Systems now translate spoken conversations almost instantly whilst preserving speaker voice characteristics. This enables natural multilingual meetings, customer service calls, and international negotiations without language barriers.

Multimodal translation—processing text, speech, images, and video simultaneously—emerges as a powerful capability. Systems translate street signs in augmented reality applications, subtitle videos in real-time, and convert multilingual document images seamlessly. This comprehensive approach transforms how people interact across languages in physical and digital spaces.

Adaptive and Continuous Learning

Modern systems increasingly implement adaptive MT—engines that improve continuously from user feedback. When linguists correct translations, systems incorporate those corrections, gradually reducing recurring errors. This capability proves transformative for enterprise deployments, as engines adapt automatically to organisation-specific terminology and style without manual retraining.

Continuous learning addresses traditional NMT limitations around terminology consistency and domain adaptation. Systems evolve alongside organisations, maintaining translation quality as products, services, and language evolve.

Low-Resource Language Expansion

Significant research focuses on improving translation quality for low-resource languages with limited parallel training data. Techniques like multilingual transfer learning—where models leverage knowledge from high-resource languages to improve low-resource performance—demonstrate promising results.

Meta’s NLLB-200 model, translating across 200 languages, exemplifies this expansion. Such comprehensive language coverage enables truly global communication, connecting communities previously isolated by language barriers.

Zero-Shot Translation Capabilities

Advanced neural systems demonstrate zero-shot translation—translating between language pairs never explicitly trained together. A system trained on English-French and English-German pairs can translate French-German directly by leveraging shared representations developed during training. This capability dramatically reduces training requirements for comprehensive multilingual systems.

Zero-shot performance continues improving as architectures evolve, potentially enabling translation across arbitrary language pairs with minimal direct training data.

Enhanced Context and Coherence

Future systems address current limitations around document-level coherence and discourse understanding. Rather than translating sentences independently, advanced models maintain context across paragraphs and entire documents. This evolution enables better pronoun resolution, terminology consistency, and narrative coherence in long-form translation.

Context-aware systems understand that “bank” means financial institution in one paragraph and riverbank in another based on surrounding discourse, reducing ambiguity errors that plague current sentence-level approaches.

Integration with Business Ecosystems

Translation increasingly integrates seamlessly with broader business systems. Content management platforms, customer relationship management systems, and e-commerce platforms embed translation capabilities directly into workflows. APIs enable automatic translation at content creation points, eliminating manual export-translate-import cycles.

Client portal platforms exemplify this trend, offering integrated translation management with project tracking, quality assessment, and automated workflows within unified interfaces.

Quality Estimation and Confidence Scoring

Emerging systems provide confidence scores indicating translation reliability. High-confidence segments pass through without human review, whilst low-confidence translations receive automatic flagging for expert attention. This intelligent routing optimises resource allocation, applying human expertise where it delivers maximum value.

Quality estimation reduces post-editing costs by identifying segments requiring attention before human review begins, enabling efficient hybrid workflows.
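The routing logic described above can be sketched in a few lines. The `Segment` class, the `confidence` field, and the 0.85 threshold are hypothetical. In practice the score would come from a quality-estimation model and the threshold would be tuned on pilot data.

```python
from dataclasses import dataclass


@dataclass
class Segment:
    source: str
    translation: str
    confidence: float  # assumed quality-estimation score in [0, 1]


def split_by_confidence(segments, threshold=0.85):
    """Separate segments that can pass through from those needing review."""
    auto_approved = [s for s in segments if s.confidence >= threshold]
    needs_review = [s for s in segments if s.confidence < threshold]
    return auto_approved, needs_review


segments = [
    Segment("Press the power button.", "Appuyez sur le bouton d'alimentation.", 0.96),
    Segment("It's raining cats and dogs.", "Il pleut des chats et des chiens.", 0.41),
]
approved, flagged = split_by_confidence(segments)
```

A lower threshold sends fewer segments to reviewers but accepts more risk. The right balance depends on the content type.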

Ethical AI and Bias Mitigation

Growing awareness of translation bias drives research into fairer, more inclusive systems. Neural models can perpetuate gender stereotypes, cultural biases, and representational harms present in training data. Future developments focus on detecting and mitigating such biases, ensuring translations respect diverse perspectives and identities.

Ethical AI practices become increasingly important as translation systems influence cross-cultural communication and information access worldwide.

Recurrent vs Transformer-Based NMT

The evolution from recurrent neural networks to transformer architectures represents one of neural machine translation’s most significant advances. Understanding these approaches’ differences illuminates modern NMT capabilities.

Recurrent Neural Network Architecture

Early neural machine translation systems employed recurrent neural networks (RNNs), most commonly Long Short-Term Memory (LSTM) networks, to process sequences. RNNs process input words sequentially, maintaining hidden states that carry information forward through the sequence. Each word’s processing depends on previous words, creating an inherent order dependency.

This sequential processing matched intuitions about language understanding—humans read words one after another. RNN-based NMT with attention mechanisms delivered significant quality improvements over statistical approaches, establishing neural translation as viable technology.

RNN Limitations

Despite initial success, recurrent architectures faced substantial limitations. Sequential processing prevented parallelisation: each word could only be processed after the previous one, limiting computational efficiency. Training speed suffered accordingly, particularly for long sentences.

RNNs struggled with long-range dependencies despite LSTM improvements. Information from early sentence positions degraded as processing continued, leading to translation errors in lengthy passages. The vanishing gradient problem during training limited how effectively systems learned from distant context.
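A toy recurrence helps show why this processing is inherently sequential. The sketch below uses a single scalar hidden state with made-up weights (`w_x` and `w_h` are illustrative values, not taken from any real system). Real LSTMs use vectors and gating, which this deliberately omits.

```python
import math


def rnn_states(inputs, w_x=0.5, w_h=0.9):
    """Toy scalar RNN: h_t = tanh(w_x * x_t + w_h * h_{t-1})."""
    h = 0.0
    states = []
    for x in inputs:
        # Each step needs the previous hidden state, so the steps
        # cannot be computed in parallel.
        h = math.tanh(w_x * x + w_h * h)
        states.append(h)
    return states


# A signal at the first position, followed by empty inputs:
states = rnn_states([1.0, 0.0, 0.0, 0.0])
```

In this toy setting the hidden state shrinks at every step after the first input, which loosely illustrates how information from early positions fades as the sequence grows.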

Transformer Revolution

The 2017 introduction of transformer architecture revolutionised neural machine translation. Transformers abandoned recurrence entirely, processing all input words simultaneously through self-attention mechanisms. Rather than sequential hidden states, transformers compute attention weights between every word pair, capturing relationships regardless of distance.

This approach, introduced in the paper “Attention Is All You Need”, delivered multiple advantages. Parallel processing dramatically accelerated training. Long-range dependencies became far easier to model, because attention connects distant words as directly as adjacent ones. Translation quality improved substantially across benchmarks.

Self-Attention Mechanisms

Transformer self-attention computes relationships between all input positions simultaneously. When processing “The girl rides the bike,” the system simultaneously considers how each word relates to every other word. This global view enables better disambiguation and contextual understanding than sequential processing.

Multi-head attention—computing multiple attention patterns in parallel—allows transformers to capture different relationship types simultaneously. One attention head might focus on syntactic dependencies whilst another captures semantic relationships, enabling richer representation.
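The core computation can be sketched as scaled dot-product self-attention in plain Python. This is a simplification under stated assumptions: the queries, keys, and values are the input vectors themselves, whereas real transformers first apply learned projection matrices and run several heads in parallel. The toy vectors are invented for illustration.

```python
import math


def softmax(scores):
    """Turn raw scores into weights that sum to 1."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention(vectors):
    """Single-head self-attention with queries = keys = values = inputs."""
    d = len(vectors[0])
    outputs, weights = [], []
    for q in vectors:
        # Compare this position with every position, including itself.
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d)
                  for k in vectors]
        w = softmax(scores)
        weights.append(w)
        # The output is a weighted average of all value vectors.
        outputs.append([sum(wj * v[i] for wj, v in zip(w, vectors))
                        for i in range(d)])
    return outputs, weights


# Hypothetical 2-dimensional embeddings for three tokens.
toy = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
outputs, weights = self_attention(toy)
```

Each row of `weights` shows how much one position attends to every other position. Because no row depends on another row's result, all positions can be computed in parallel.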

Performance Comparisons

Empirical comparisons consistently demonstrate transformer superiority over recurrent architectures. Transformer NMT achieves higher BLEU scores, produces more fluent translations, and handles long sentences more reliably than RNN-based systems. Training efficiency improvements prove equally significant—transformers train faster on equivalent hardware, enabling iteration and experimentation that recurrent models made impractical.

Multilingual transformer systems outperform recurrent equivalents substantially, particularly for zero-shot translation between language pairs not explicitly trained together. The transformer’s attention-based architecture better captures cross-lingual relationships that enable effective multilingual representation.

Current Dominance

Transformers now dominate neural machine translation. All major commercial systems—Google Translate, DeepL, Microsoft Translator—employ transformer architectures. Research focuses almost exclusively on transformer variations and improvements rather than recurrent approaches. The architecture’s advantages prove so substantial that RNN-based NMT effectively became obsolete within years of transformer introduction.

Ongoing Evolution

Transformer research continues advancing the architecture. Efficient transformers address computational costs for very long sequences. Sparse attention patterns reduce memory requirements whilst maintaining quality. Multilingual transformers enable increasingly effective cross-lingual transfer, improving low-resource language performance.

The transformer architecture’s flexibility enables experimentation with novel attention patterns, position encodings, and training objectives, driving continued quality improvements.

What Powers an NMT Engine: Data, Architecture, and Hardware

Neural machine translation systems depend on three critical components working in concert. Understanding these elements helps organisations appreciate NMT requirements and capabilities.

Data: The Foundation

Training data quality and quantity fundamentally determine NMT system performance. Neural networks learn translation patterns from parallel corpora—collections of text in source and target languages where sentences align one-to-one. Millions of such sentence pairs enable systems to discover grammatical structures, vocabulary mappings, and stylistic conventions.

- Data Requirements: High-quality NMT requires extensive parallel data. Major language pairs benefit from tens or hundreds of millions of sentence pairs accumulated from parliamentary proceedings, international organisations, web-crawled content, and commercial sources. Low-resource languages with limited parallel data demonstrate correspondingly lower translation quality.

- Data Quality: Quality matters as much as quantity. Clean, accurate alignments enable effective learning. Poor alignments, mistranslations in training data, and noisy web-scraped content degrade model quality. Domain-specific data proves essential for specialised applications: medical translation requires medical corpora, and legal translation needs legal texts.

- Data Preprocessing: Raw parallel data requires substantial preprocessing before training. Text cleaning removes formatting artefacts, normalises punctuation, and handles character encoding. Sentence alignment ensures source and target sentences correspond correctly. Tokenisation splits text into units the neural network processes.

- Monolingual Data: Advanced training techniques leverage monolingual data, meaning text in the target language with no corresponding source text. In back-translation, a reverse-direction model translates this target-language text into the source language, and the resulting synthetic source sentences are paired with the genuine target sentences to create additional parallel data. This proves particularly valuable for low-resource languages where parallel data remains scarce.
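The cleaning and filtering steps described in the list above can be sketched as follows. This is a minimal illustration using only the Python standard library. The `max_ratio` value of 3.0 and the function names are assumptions, and whitespace splitting stands in for the subword tokenisers that production systems typically use.

```python
import unicodedata


def clean(text: str) -> str:
    """Normalise Unicode forms and collapse runs of whitespace."""
    text = unicodedata.normalize("NFKC", text)
    return " ".join(text.split())


def prepare_pairs(pairs, max_ratio=3.0):
    """Clean, filter, deduplicate, and tokenise (source, target) pairs."""
    seen, kept = set(), []
    for src, tgt in pairs:
        src, tgt = clean(src), clean(tgt)
        if not src or not tgt:
            continue  # drop pairs with an empty side
        src_tokens, tgt_tokens = src.split(), tgt.split()
        # A very lopsided length ratio often signals a misaligned pair.
        ratio = (max(len(src_tokens), len(tgt_tokens))
                 / min(len(src_tokens), len(tgt_tokens)))
        if ratio > max_ratio:
            continue
        if (src, tgt) in seen:
            continue  # drop exact duplicates
        seen.add((src, tgt))
        kept.append((src_tokens, tgt_tokens))
    return kept


raw = [
    ("Hello   world", "Bonjour le monde"),
    ("Hello world", "Bonjour le monde"),   # duplicate after cleaning
    ("", "Texte sans source"),             # empty source side
    ("Yes", "Ceci est une phrase beaucoup trop longue"),  # likely misaligned
]
prepared = prepare_pairs(raw)
```

Only the first pair survives in this example. Real pipelines add further checks, such as language identification, but the shape of the filter is the same.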

Architecture: The Model

Neural network architecture defines how systems process input and generate translations. Modern NMT predominantly employs transformer architectures with encoder-decoder structure and multi-head attention.

- Encoder Design: Encoders process source language text, converting words into contextualised numerical representations. Multiple transformer layers stack attention and feed-forward components, progressively refining representations. Position encodings help models understand word order, which attention mechanisms alone don’t capture naturally.

- Decoder Design: Decoders generate target translations autoregressively, one token at a time, based on previously generated tokens and encoder outputs. Cross-attention layers enable decoders to focus on relevant source positions when generating each target token. Masked self-attention ensures decoders only attend to previously generated positions, maintaining the autoregressive generation process.

- Attention Mechanisms: Multi-head attention enables transformers to capture diverse relationship types simultaneously. Self-attention in encoders helps each word develop contextualised representations considering all other input words. Cross-attention in decoders connects target generation to source context, implementing translation alignment.

- Model Size: Modern systems range from hundreds of millions to billions of parameters. Larger models generally achieve better performance, capturing subtler linguistic patterns. However, size brings diminishing returns: doubling parameters doesn’t double quality. Efficient architectures aim to maximise performance per parameter, enabling strong translation with manageable computational requirements.
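Two of the ideas in the list above are small enough to sketch directly: position encodings and the decoder's mask. The first function follows the sinusoidal scheme from the original transformer paper, which is one option among several (many systems learn their position embeddings instead). The function names are illustrative, and `d_model` is assumed to be even.

```python
import math


def positional_encoding(num_positions: int, d_model: int):
    """Sinusoidal position encodings: sine on even dimensions,
    cosine on odd dimensions, with wavelengths that grow with the
    dimension index."""
    table = []
    for pos in range(num_positions):
        row = []
        for i in range(d_model):
            angle = pos / (10000 ** ((2 * (i // 2)) / d_model))
            row.append(math.sin(angle) if i % 2 == 0 else math.cos(angle))
        table.append(row)
    return table


def causal_mask(n: int):
    """mask[i][j] is True when position i may attend to position j,
    i.e. only to itself and earlier positions."""
    return [[j <= i for j in range(n)] for i in range(n)]


encodings = positional_encoding(num_positions=3, d_model=4)
mask = causal_mask(3)
```

The encodings are added to the token embeddings so that otherwise order-blind attention can tell positions apart. The mask is what keeps decoder generation autoregressive during training.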

Hardware: The Computing Infrastructure

Neural machine translation demands substantial computational resources, particularly during training. Graphics processing units (GPUs) provide the parallel computation capabilities essential for efficient neural network training and inference.

- Training Hardware: Training production-quality NMT systems requires high-end GPUs—typically NVIDIA A100, H100, or B200 models with 80GB+ memory. Multiple GPUs working in parallel accelerate training for large models. Complete training runs may require weeks on substantial GPU clusters, consuming significant electricity.

Professional NMT development typically occurs in specialised data centres with high-performance computing infrastructure. Cloud platforms like AWS, Google Cloud, and Azure offer GPU instances for organisations without on-premises capabilities, reducing capital requirements but introducing recurring operational costs.

- Inference Hardware: Deploying trained models for actual translation requires less powerful hardware than training. A standard PC with a mid-range GPU (8GB+ memory) can run inference for many applications. Cloud-based translation APIs handle infrastructure management transparently, enabling organisations to use NMT without hardware investment.

- Optimisation Techniques: Various optimisation approaches reduce hardware requirements. Model quantisation reduces precision from 32-bit to 8-bit or lower, dramatically decreasing memory requirements with minimal quality impact. Model distillation trains smaller “student” networks that approximate larger “teacher” models’ behaviour, enabling deployment on resource-constrained devices.

- Scalability Considerations: Production deployments require infrastructure capable of handling peak loads. Real-time translation applications demand low-latency inference, necessitating sufficient computational capacity to process requests promptly. Load balancing distributes requests across multiple servers, ensuring responsive performance under varying demand.
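The quantisation idea mentioned in the list above can be illustrated with a minimal sketch. It assumes symmetric quantisation with one scale factor for the whole weight list, which is only one of several schemes in use. The function names and sample weights are invented.

```python
def quantize_int8(weights):
    """Map floats to integers in [-127, 127] using one shared scale."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate float values from the stored integers."""
    return [q * scale for q in quantized]


weights = [0.8, -1.0, 0.25, 0.0]
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
```

Each stored value needs 8 bits instead of 32, at the cost of a rounding error of at most half the scale per weight. Whether that error is acceptable has to be checked against translation quality on real test data.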

Integration and Orchestration

Effective NMT systems integrate these components seamlessly. Training pipelines automate data preprocessing, model training, and evaluation. Continuous integration enables iterative improvement as new training data becomes available or architectures evolve. Deployment infrastructure serves trained models reliably, monitoring performance and enabling updates without service interruption.

Organisations partnering with language service providers like Elite Asia access sophisticated NMT infrastructure without internal development. Professional providers maintain training data, optimise architectures, and operate deployment infrastructure, enabling businesses to leverage advanced translation technology through simple APIs or web interfaces.

Conclusion: Embracing Neural Machine Translation for Global Success

Neural machine translation has matured into an indispensable technology for organisations operating in multilingual markets. With accuracy reaching 94.2% across major language pairs and continuous improvements driven by advancing architectures and expanding training data, NMT delivers tangible business value through faster turnaround, reduced costs, and scalable multilingual communication.

Knowing when and how to deploy neural translation is essential. The technology excels at high-volume technical content, customer support materials, and repetitive documentation where speed and consistency matter most. Combining NMT with human post-editing creates hybrid workflows that capture machine efficiency whilst ensuring that human expertise handles cultural nuances and quality verification.

As translation technology evolves toward large language models, real-time multimodal capabilities, and adaptive learning systems, organisations that integrate NMT effectively position themselves for competitive advantage in global markets. The future belongs to businesses that thoughtfully combine artificial intelligence with human expertise, leveraging each approach’s strengths whilst recognising their respective limitations.

Whether expanding e-commerce operations internationally, localising software products for global audiences, or enabling multilingual customer service, neural machine translation provides the foundation for scalable, cost-effective language solutions. Success requires strategic implementation—selecting appropriate content types, establishing quality processes, and partnering with experienced language service providers who understand both technology capabilities and linguistic requirements.

Take Your Global Communication to the Next Level

Ready to leverage neural machine translation for your business? Elite Asia’s multilingual technology solutions combine cutting-edge NMT with expert linguist networks to deliver high-quality translations at scale. Our Client Portal provides seamless access to AI-powered translation tools alongside professional post-editing services, enabling you to optimise costs whilst maintaining quality.

Contact Elite Asia today to discuss how our hybrid approach—combining advanced neural translation engines with industry-specialist post-editors—can accelerate your international growth. Transform language barriers into business opportunities with technology-enabled translation solutions designed for the modern global marketplace.